GOAL: Generating 4D Whole-Body Motion for Hand-Object Grasping

Abstract

Generating digital humans that move realistically has many applications and is widely studied, but existing methods focus on the major limbs of the body, ignoring the hands and head. Hands have been separately studied but the focus has been on generating realistic static grasps of objects. To synthesize virtual characters that interact with the world, we need to generate full-body motions and realistic hand grasps simultaneously. Both sub-problems are challenging on their own and, together, the state-space of poses is significantly larger, the scales of hand and body motions differ, and the whole-body posture and the hand grasp must agree, satisfy physical constraints, and be plausible. Additionally, the head is involved because the avatar must look at the object to interact with it. For the first time, we address the problem of generating full-body, hand, and head motions of an avatar grasping an unknown object. As input, our method, called GOAL, takes a 3D object, its position, and a starting 3D body pose and shape. GOAL outputs a sequence of whole-body poses using two novel networks. First, GNet generates a goal whole-body grasp with a realistic body, head, arm, and hand pose, as well as hand-object contact. Second, MNet generates the motion between the starting and goal pose. This is challenging, as it requires the avatar to walk towards the object with foot-ground contact, orient the head towards it, reach out, and grasp it with a realistic hand pose and hand-object contact. To achieve this the networks exploit a representation that combines SMPL-X body parameters and 3D vertex offsets. We train and evaluate GOAL, both qualitatively and quantitatively, on the GRAB dataset. Results show that GOAL generalizes well to unseen objects, outperforming baselines. A perceptual study shows that GOAL’s generated motions approach the realism of GRAB’s ground truth. GOAL takes a step towards synthesizing realistic full-body object grasping. 
Our models and code are available for research purposes.
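The abstract notes that the avatar must orient its head towards the object. As a purely illustrative sketch (not taken from the GOAL code base), the snippet below measures how far a head's forward axis deviates from the direction to an object. The function names `gaze_direction` and `gaze_error_deg`, and the convention that the head has a single forward axis, are assumptions made for this example only.

```python
import math
from typing import Sequence, Tuple


def gaze_direction(head: Sequence[float], obj: Sequence[float]) -> Tuple[float, float, float]:
    """Unit vector pointing from the head position to the object position."""
    d = [o - h for h, o in zip(head, obj)]
    norm = math.sqrt(sum(c * c for c in d))
    if norm == 0.0:
        raise ValueError("head and object positions coincide")
    return (d[0] / norm, d[1] / norm, d[2] / norm)


def gaze_error_deg(forward: Sequence[float],
                   head: Sequence[float],
                   obj: Sequence[float]) -> float:
    """Angle in degrees between the head's forward axis and the direction to the object.

    ASSUMPTION: `forward` is a hypothetical forward-facing axis of the head;
    how such an axis is obtained from a body model is outside this sketch.
    """
    target = gaze_direction(head, obj)
    f_norm = math.sqrt(sum(c * c for c in forward))
    if f_norm == 0.0:
        raise ValueError("forward axis must be non-zero")
    cos_angle = sum(f * t for f, t in zip(forward, target)) / f_norm
    # Clamp to guard against rounding slightly outside [-1, 1].
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.degrees(math.acos(cos_angle))
```

An error of 0 degrees means the head looks straight at the object; larger values mean the head is turned away from it.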

What is GOAL?

GOAL is a generative model that produces full-body motion of a human walking towards and grasping unseen 3D objects. GOAL consists of two main steps:

- GNet generates the final whole-body grasp of the motion.

- MNet generates the motion from the starting frame to the grasp frame.

GOAL is trained on the GRAB dataset. For more details, please refer to the paper or the project website.
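The two steps above can be sketched as a small pipeline. This is a minimal, hypothetical illustration of the data flow only: `GraspQuery`, `goal_grasp_stub`, `motion_stub`, and `generate_sequence` are names invented here, the pose is a plain list of floats rather than real SMPL-X parameters, the object mesh and body shape are left out for brevity, and linear interpolation stands in for the learned networks. It is not the GOAL implementation.

```python
from dataclasses import dataclass
from typing import List

Pose = List[float]  # hypothetical flat pose vector (stand-in for SMPL-X parameters)


@dataclass
class GraspQuery:
    """A subset of the inputs named in the abstract."""
    object_position: List[float]  # 3D location of the object
    start_pose: Pose              # starting whole-body pose


def goal_grasp_stub(query: GraspQuery) -> Pose:
    """Placeholder for step 1 (the role GNet plays): return a goal pose.

    ASSUMPTION: this toy version treats the first three pose entries as a
    root translation and simply moves them onto the object position.
    """
    goal = list(query.start_pose)
    goal[:3] = query.object_position
    return goal


def motion_stub(start: Pose, goal: Pose, num_frames: int) -> List[Pose]:
    """Placeholder for step 2 (the role MNet plays): frames from start to goal.

    ASSUMPTION: plain linear interpolation, used only so the sketch runs.
    """
    if num_frames < 2:
        raise ValueError("need at least a start and a goal frame")
    frames = []
    for i in range(num_frames):
        t = i / (num_frames - 1)
        frames.append([(1 - t) * s + t * g for s, g in zip(start, goal)])
    return frames


def generate_sequence(query: GraspQuery, num_frames: int = 5) -> List[Pose]:
    """Chain the two steps: pick the goal grasp first, then fill in the motion."""
    goal = goal_grasp_stub(query)
    return motion_stub(query.start_pose, goal, num_frames)


query = GraspQuery(object_position=[1.0, 0.0, 2.0], start_pose=[0.0] * 6)
sequence = generate_sequence(query, num_frames=5)
```

The point of the split is that the goal grasp is fixed before any motion is generated, so the motion step only has to connect two known poses.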

GOAL generates diverse motions to reach different goal locations around the human.

GNet

Below you can see some whole-body static grasps generated by GNet. The hand close-ups show the same grasps and are included for better visualization:

Objects shown: Apple, Binoculars, Toothpaste.

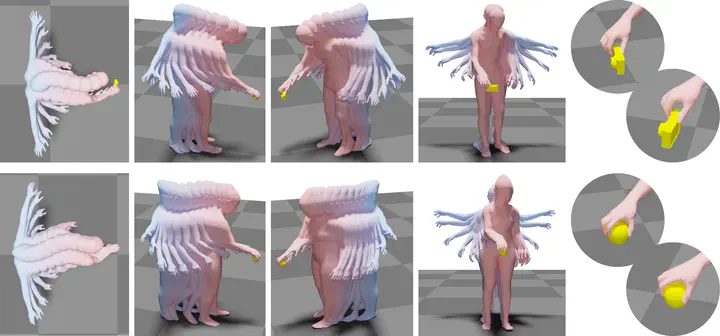

MNet

Below you can see some whole-body motions generated by MNet, in which the human walks to and grasps 3D objects:

Objects shown: Camera, Mug, Apple.

For more details, check out the YouTube video.

Data and Code

Please register and accept the license agreement on the GRAB website to get access to the GRAB dataset.

Citation

@inproceedings{taheri2022goal,
  title = {{GOAL}: {G}enerating {4D} Whole-Body Motion for Hand-Object Grasping},
  author = {Taheri, Omid and Choutas, Vasileios and Black, Michael J. and Tzionas, Dimitrios},
  booktitle = {Conference on Computer Vision and Pattern Recognition ({CVPR})},
  year = {2022},
  url = {https://goal.is.tue.mpg.de}
}

@inproceedings{GRAB:2020,
  title = {{GRAB}: {A} Dataset of Whole-Body Human Grasping of Objects},
  author = {Taheri, Omid and Ghorbani, Nima and Black, Michael J. and Tzionas, Dimitrios},
  booktitle = {European Conference on Computer Vision ({ECCV})},
  year = {2020},
  url = {https://grab.is.tue.mpg.de}
}